One of the most interesting questions after the 2015 UK General Election is this: how could all of the electoral polling possibly have gone so incredibly wrong?

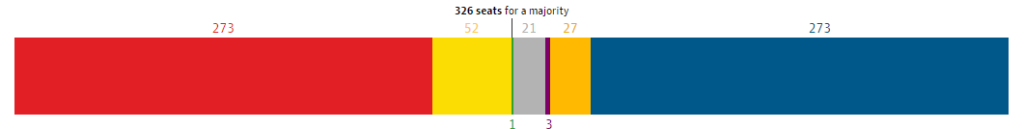

Labour and the Conservatives were predicted to be neck-and-neck and both short of forming a government on their own, with Labour losing about 50 seats in Scotland to the Scottish National Party.

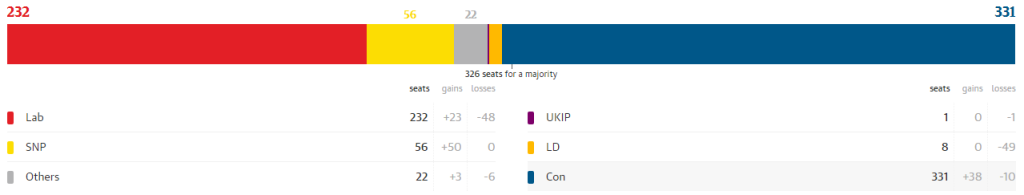

Instead, the Conservatives won a small majority of total seats. Netted out, Labour gained only four English seats from the Conservatives despite its low 2010 base, and lost two seats in its heartland of Wales to the Conservatives.

Labour’s remaining gains in England came from the brutal destruction of the Liberal Democrats, which the polls dramatically understated. This was cold comfort, as the Conservatives took far more former Lib Dem seats, including almost all of the ones that the polls had predicted would stay orange.

So what the hell happened?

Two explanations popularly floated in the media have been a late swing and the ‘shy Tories’ problem. Both are almost certainly wrong.

Don’t mean a thing if it ain’t got late swing

One thing we can reasonably rule out is the concept of a late swing to the Conservatives: a scenario where the polls accurately captured voting intentions, but where people changed their mind at the last minute.

We know this, because online pollster YouGov made an innovative attempt at a kind of internet-based exit poll (this is not how YouGov described it, but it’ll do). After the vote, it contacted members of its panel and asked them how they voted. The results of this poll were almost identical to those recorded in opinion polls leading up to the election.

Meanwhile, the UK’s major TV networks carried out a traditional exit poll, in which voters at polling stations effectively repeated their real vote. This poll (which covered a balanced range of constituencies, but whose results weren’t adjusted as they are for small-sample opinion polls) found results that were utterly different from all published opinion polls, and came far closer to the final result.

Putting the two together, the likeliest explanation is that people were relatively honest with YouGov about how they voted, and that they voted in the same way they had told all the pollsters they were going to vote. This isn’t a late swing problem.

No True Shy Tories

If we ignore Scotland (where the polls were pretty much correct), this is a similar outcome to the 1992 General Election: opinion polling predicted a majority for Labour, but the Conservatives instead won a majority and another five years of power.

A common narrative for poll failure after the 1992 election was one of ‘shy Tories’ [1]. In this story, because Tories are seen as baby-eating monsters, folk who support them are reluctant to confess anything so vile in polite society, and therefore tell pollsters that they’re going to vote for the Green Party, the Lib Dems, or possibly Hitler.

From 1992 onwards, polls were weighted much more carefully to account for this perceived problem, with actual previous election results and vote flows also being used to adjust raw data towards what could reasonably be expected. This happened in 2015, as it had at every election in between [2].

We know that the internet provides the illusion of anonymity [3]. People who’d be unlikely in real life to yell at a footballer or a children’s novelist that they were a scumsucking whorebag are quite happy to do so over Twitter. Foul-minded depravities that only the boldest souls would request at a specialist bookstore are regularly obtained by the mildest-mannered via an HTTP request.

In this environment, if ‘shy Tories’ and poor adjustment for them were the major problems, you would expect internet-based polls to have come closer to the real result than phone-based polls. But they did the opposite:

The current 10-day average among telephone polls has the Tories on 35.5% [and] Labour on 33.5%… The average of online polls has the Conservatives (32%) trailing Labour (34%)

So what is the explanation, then? This goes a bit beyond the scope of a quick blog post. But having ruled out late swing and unusually shy Tories in particular, what we have left, more broadly, is the nature of the weighting applied. Is groupthink among pollsters so great that weighting is used to ensure that you match everyone else’s numbers and don’t look uniquely silly? Are there problems with the underlying data used for adjustment?

Personally, I suspect this may be a significant part of it:

According to the British Election Study (BES), nearly 60 per cent of young people, aged 18-24, turned out to vote. YouGov had, however, previously suggested that nearly 69% of under-25s were “absolutely certain” to vote on 7 May.

Age is one of the most important features driving voting choice, and older voters are both far more conservative and far more Conservative than younger voters [4]. If turnout among younger voters in 2015 was significantly lower than opinion pollsters were expecting, this seems like a good starting point for a post-mortem.
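The turnout argument can be illustrated with a toy calculation. The Python sketch below is a minimal, hypothetical model, not any pollster’s actual method: it weights each age band’s party preferences by population share times assumed turnout, then recomputes the headline shares with 18-24 turnout set first to 69% (treating YouGov’s “absolutely certain” figure as a turnout estimate, which is itself a simplification) and then to 60% (the BES figure). Every other number, including the age bands, their population shares, their turnout and their party splits, is invented purely to show the direction of the effect.

```python
# Hypothetical illustration only: all figures are invented, except the two
# 18-24 turnout rates (69% and 60%) quoted in the post.

# Each hypothetical age band: share of the electorate, assumed turnout,
# and the share of that band's voters backing each party.
AGE_BANDS = {
    "18-24": {"pop": 0.12, "turnout": 0.69, "con": 0.27, "lab": 0.43},
    "25-64": {"pop": 0.64, "turnout": 0.65, "con": 0.33, "lab": 0.35},
    "65+":   {"pop": 0.24, "turnout": 0.78, "con": 0.45, "lab": 0.25},
}


def projected_shares(bands):
    """Return each party's share of all votes cast, weighting every band
    by (population share x turnout)."""
    votes = {"con": 0.0, "lab": 0.0}
    total_voters = 0.0
    for band in bands.values():
        voters = band["pop"] * band["turnout"]
        total_voters += voters
        for party in votes:
            votes[party] += voters * band[party]
    return {party: v / total_voters for party, v in votes.items()}


def with_youth_turnout(bands, turnout):
    """Return a copy of the bands with the 18-24 turnout overridden,
    leaving the original dictionary untouched."""
    adjusted = {name: dict(band) for name, band in bands.items()}
    adjusted["18-24"]["turnout"] = turnout
    return adjusted


for label, rate in [("YouGov 'certain to vote'", 0.69), ("BES turnout", 0.60)]:
    shares = projected_shares(with_youth_turnout(AGE_BANDS, rate))
    lead = shares["con"] - shares["lab"]
    print(f"{label}: Con {shares['con']:.1%}, Lab {shares['lab']:.1%}, "
          f"Con lead {lead:+.1%}")
```

Because the youngest band leans Labour in this toy model, lowering its assumed turnout widens the Conservative lead. How big the shift is depends entirely on the made-up inputs; a real post-mortem would need actual turnout and vote-intention data for every band, not just the youngest.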

Update: YouGov’s Anthony Wells comments on the YouGov not-quite-an-exit-poll:

@johnb78 The YG on-the-day poll was more specific than that. We contacted the SAME people as answered the final poll. No swing amongst them

— Anthony Wells (@anthonyjwells) May 9, 2015

—

[1] Some polling experts think the actual failure in 1992 had more to do with weighting based on outdated demographic information, but opinion is divided on the matter.

[2] Several polls in 2015 that showed 33-35% Labour vote shares were weighted down from raw data that showed Labour with closer to 40%.

[3] An illusion that diminishes the closer one comes to working in IT security.

[4] There are papers suggesting that this is to do with cohorts rather than ageing, and that It’s All More Complicated Than That, but anyone denying the basic proposition above is a contrarian, a charlatan or both.

It most certainly was, but not in the way you mean. They select a fairly large number of polling districts nationally that are considered representative of the country in different ways, then compare exit poll numbers from those districts with previous voting patterns in the same districts.

Because they select the districts on demographic grounds, the sample is demographically chosen, but the results aren’t reweighted afterwards in the way opinion polls are. It’s a more comparative system, and a much more accurate one, but one that’s impossible to replicate except on polling day.

I do wonder how they take postal votes into account though.

Absolutely agreed. I’ve updated the text to make clear that I was only talking about post-survey adjustments – thanks.

Cool, I broadly agree, for what it’s worth. I’ve been more and more sure since 2010 that the polls were completely off. They made a lot of adjustments to methodology (especially YouGov), but they’re just not getting the right data.

Of course, I did think they were wrong by underpolling us poor Lib Dems, but, well…

(for what it’s worth, my experience concurs with a lot of LD experience: the answers and responses we were getting from voters were nothing like what happened, so it’s not just pollsters)

Is it possible that part of the failure of online polling is down to the old chestnut that the samples are essentially self-selected? Maybe many Conservative voters are older people, or people in rural areas: people perhaps less likely to use the internet? And perhaps some Conservative voters aren’t the sort of people who think their opinions are so important and worthy that they’ll sign up to an online panel to have their views recorded?

I accept this doesn’t quite fully explain why phone polls would also underestimate Conservative support though, although I can imagine a “shy Tory” effect is at play for phone polls.

I saw some of the conversation you had with Anthony about how he’s wondering if differential enthusiasm is a factor, and I think that might come into it. I wonder if it’s specifically a General Election effect in that there are a lot of people who will only ever vote at GEs, so will probably give themselves a low likelihood to vote and thus get weighted down? (In more detail here http://www.nickbarlow.com/blog/?p=4340)